Ghostart: Making decisions visible so writing sounds like a person wrote it

Most AI writing tools produce more content. We built one that produces better decisions.

Client: Ghostart

Challenge:

Professional content — LinkedIn posts, articles, newsletters, blogs — increasingly sounds the same. AI tools made it faster to produce but harder to distinguish. The problem wasn't generation speed. It was that nobody was making real decisions about what to say.

What we did:

Built a writing platform where AI surfaces the decisions a writer is already making, often without realising it, and helps them choose intentionally. The system learns how each person thinks, writes and explains things, and improves the more they use it.

Outcome:

A platform where individuals write content that sounds distinctly like them, and teams produce quality work without constant senior oversight — because the system makes "what good looks like" visible, not dependent on a few people's judgement.

The broader problem

Professional content has a sameness problem. Open LinkedIn. Scroll. Three posts about "lessons learned from failure." Two about "the power of vulnerability in leadership." One about "why your morning routine matters." They could have been written by anyone. Many of them were probably drafted by the same AI.

This isn't just a social media problem. It shows up whenever people need to write professionally:

Articles that read like they were assembled from a template

Newsletters that sound like every other newsletter in the same industry

Blog posts where the writer's actual experience is nowhere to be found

Team content that gets escalated for review because nobody's sure if it's "right"

The tools that were supposed to help made it worse. AI writing assistants optimise for fluency, not distinctiveness. They produce text that sounds polished but says nothing specific. The result: more content, less meaning, and senior people still stuck reviewing everything.

Why "better AI" wasn't the answer

We'd spent years running employee advocacy programmes for organisations including John Lewis & Partners, Post Office, Waitrose, BT, Iceland Foods, River Island and The Big Issue.

The pattern was always the same. Give people templates, they sound corporate. Give them AI tools, they sound generic. Give them freedom, they freeze. The common thread wasn't the tool. It was the absence of clarity about what makes something worth reading.

Underneath it all, quality lived in a few people's heads. When those people weren't available to review, everything either stalled or went out sounding safe and forgettable.

The real problem: invisible decisions

We traced the sameness problem to its root. It wasn't about writing ability or AI capability. It was about decision-making.

Good writing has visible decisions inside it

The writer chose to tell this story instead of that one. Chose to speak to this audience specifically. Chose a position that costs them something. Chose to sound like themselves instead of like a template.

Generic writing has no visible decisions

It could belong to anyone. It says things that are technically true but reveal nothing about who wrote it or why. The reader can't tell whether a human made a choice or an AI filled a gap.

This distinction — between content where decisions are visible and content where they aren't — became the foundation of everything we built.

How we approached it

We followed the same principle we use in all our work: help people act independently without lowering standards. But here, "standards" meant something specific — not grammatical correctness or brand compliance, but whether real decisions were showing through.

Built a decision-visibility framework, not a scoring system

Most writing tools assess quality after the fact. Grammar score: 92. Readability: Grade 8. SEO: Good. These numbers tell you nothing about whether the content is distinctive.

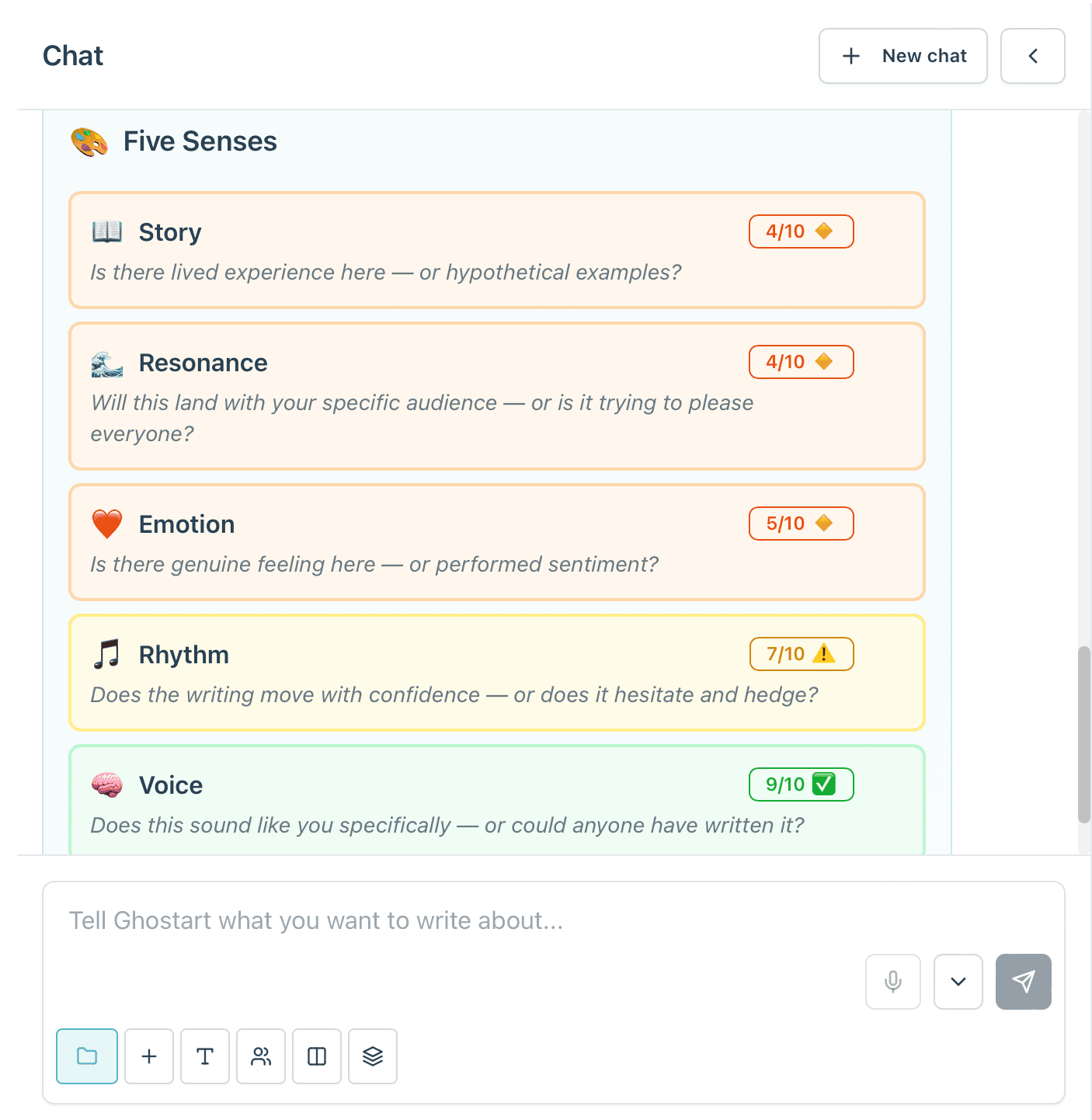

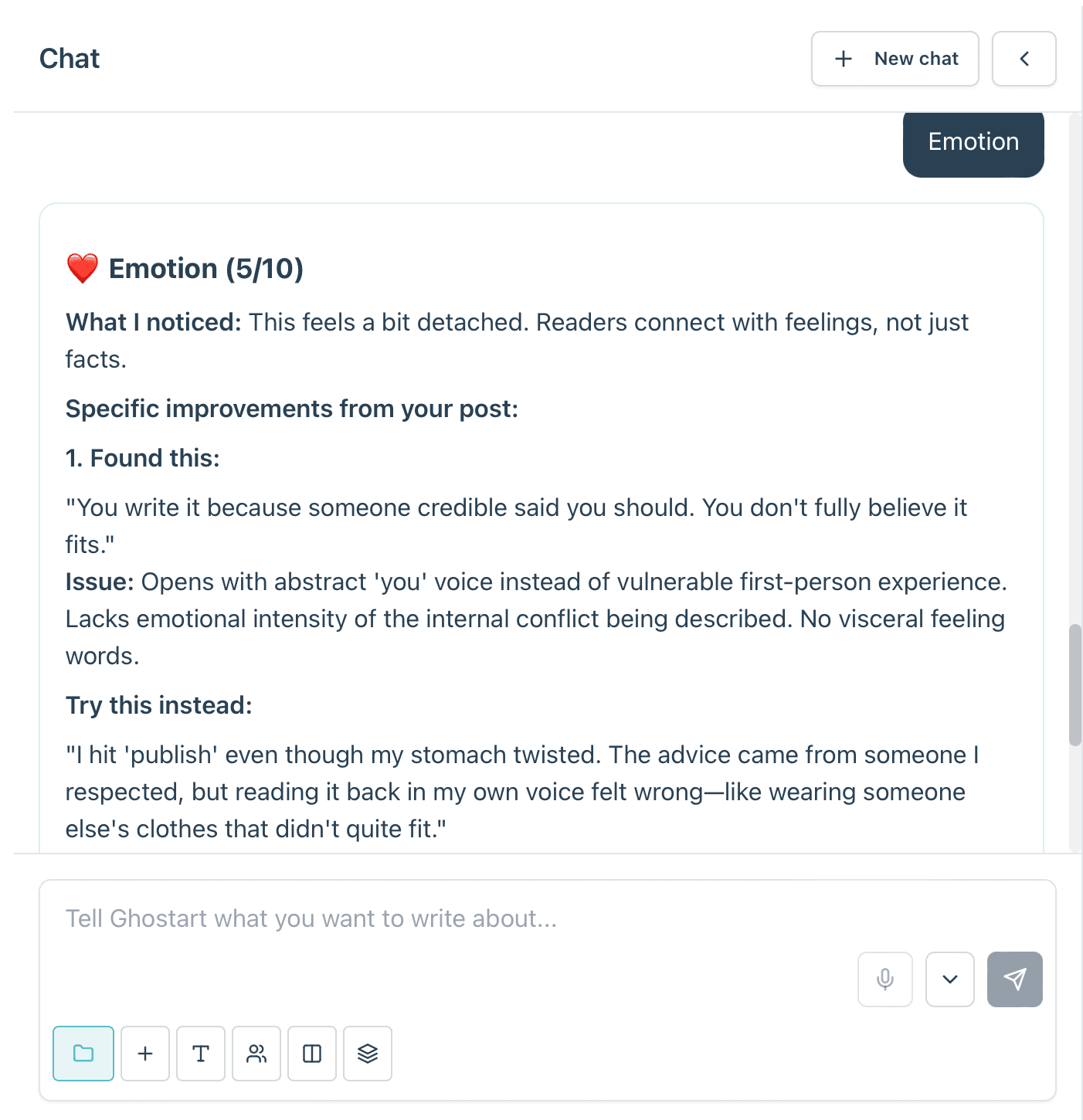

We built the Beige-ometer, a system that detects whether decisions are visible in the writing across five dimensions we call the Five Senses: Story, Voice, Emotion, Resonance and Rhythm. Each one signals that a decision was made; none is a measure of "good writing."

When the Beige-ometer detects that a passage could belong to anyone, it doesn't offer a rewrite. It surfaces the decision the writer is making — often unconsciously — and asks: "You're choosing to keep this general. Here's what that costs, and here's what specificity would give you. Which do you want?"

The writer decides. The system learns.

Made the system learn from use, not from setup

Traditional approaches require upfront profiling — fill in your audience, your tone preferences, your brand guidelines. By the time you've finished the questionnaire, you've lost interest.

Ghostart uses a Second Brain model: a per-profile model that builds itself from how you actually write, what you edit, what you accept, what you reject and what decisions you make when prompted. On day one, you can write with no setup at all. By week three, the system knows your voice well enough to generate drafts that sound like you thought about them.

The exercises from our employee advocacy work still exist, but as optional accelerators — not prerequisites. They speed up what the system would learn anyway.

Designed for individuals first, then scaled to teams

The individual experience had to work on its own. A single professional writing a LinkedIn post or a blog article needed to get value without any team infrastructure. That was non-negotiable.

But the same system scales to teams. Because the Second Brain is per-profile (not per-user), a ghostwriting admin can write on behalf of someone else with that person's voice and preferences active. Team leads can see quality patterns across their team's content without reading every draft. The Beige-ometer makes "what good looks like" a shared, visible standard — not something locked in a senior person's head.

Expanded beyond LinkedIn to full professional writing

Ghostart started as a LinkedIn content tool. The rebuild expanded it to articles, newsletters, blog posts and carousels, with publishing integrations for WordPress, Ghost and Medium, plus export options for everything else. A single piece of content can be repurposed across formats, with the Beige-ometer checking each version independently, because a good blog post doesn't automatically make a good LinkedIn post.

What changed

The individual shift

People who previously stalled at "what should I write about?" started producing content that sounded like them — because the system helped them see the decisions they were already making, rather than generating words for them to approve.

The team shift

Senior reviewers stopped being the quality bottleneck. The Beige-ometer made quality visible and shared, so team members could self-assess before submitting. Approval cycles shortened because the work arriving for review was already distinctive.

The confidence shift

Users reported feeling more confident about their writing — not because the AI made it "better," but because they understood why something worked. The system teaches through use. People who write regularly with Ghostart become clearer thinkers about their own professional voice, even when they're not using the tool.

Why this worked

We didn't start with "let's build a better AI writing tool."

We started with: "Why does professional content sound the same — and why do senior people end up reviewing everything?"

The answer was the same in both cases: decisions weren't visible. Quality lived in a few people's heads. Everyone else was either guessing or playing it safe.

Ghostart makes decisions visible. It doesn't tell you what to write. It shows you what you're choosing, what it costs, and what the alternative looks like. Then you decide.

AI supports that process. It's the intelligence inside the system, not the system itself.

What this proves

Ghostart shows how we approach problems where:

Quality is subjective but real — "good" isn't a checklist, it's whether a decision is visible

Delegation feels risky because standards aren't shared

Tools have been tried but made things worse (more volume, less distinctiveness)

Senior people are stuck in the work because no one else has the clarity to act independently

By designing systems that make invisible decisions visible, we help individuals write with more intention and businesses scale quality without bottlenecking at the top.

What it looks like in practice

The writing environment is deliberately calm — a clean canvas where you think and write, with the Beige-ometer reacting to your decisions in real time. No toolbars, no settings panels, no friction between you and the page.

The Beige-ometer surfaces decisions, not scores. It asks what you want to do, not what you should have done.

Content that comes through the system sounds like the person who wrote it. Not like AI. Not like a template. Like someone who thought about what they wanted to say and then said it.

The Second Brain learns from every interaction — the more you use it, the less the system needs to intervene.

Facing something similar?

If your team's content sounds interchangeable, or if quality still depends on senior people being in the loop — the problem probably isn't the writing. It's that decisions aren't visible.

We can help with that — whether through Ghostart directly, or by applying the same thinking to how your business communicates.